04. Why AI Fluency Isn't a Training Problem

When design leaders talk about building AI fluency on their teams, the conversation almost always ends up in the same place: training.

The playbook is familiar: build a curriculum, run workshops, bring in an expert, send people to a course, create a certification path, and measure completion rates.

And when the results disappoint, what if it isn’t because the training is bad? What if training is just the wrong solution to the actual problem?

The actual problem isn’t that designers don’t know how to use AI. The State of AI in Design report found that 96% of designers using AI today are self-taught. They figured it out on their own, without a curriculum, without a certification, without anyone measuring their completion rate. The knowledge exists. It’s spreading on its own.

What isn’t spreading on its own is the confidence to use what you know. The organizational permission to experiment without penalty. The safety to try something in front of your team, have it fail, and not have that failure reflect badly on you.

That’s not a training problem. That’s a culture problem. And it requires a different intervention.

What Actually Gets in the Way

When you ask designers why they aren’t using AI more in their work, the answers cluster around a few things.

They don’t know if it’s allowed. Not in a policy sense, but in an unspoken cultural sense. Will using AI on this project be seen as cutting corners? Will my manager wonder if the work is really mine? Is there an official tool, and am I supposed to wait for it? What is the right process?

They don’t want to look like they don’t know what they’re doing. AI tools are unfamiliar. Prompting is a skill that takes time to develop. In an environment where competence is visible and mistakes are noticed, the rational move is to stick with what you know.

The moment that changes everything hasn’t happened yet. Most designers who become enthusiastic AI users can point to a specific moment: the first time a prompt generated something that actually surprised them, the first time synthesis that would have taken two days took two hours. Before that moment, AI is abstract. After it, it’s a tool they reach for. The problem is that without the right conditions, that moment never happens.

Training doesn’t fix any of these things. A workshop teaches you how the tool works, but it doesn’t give you permission, restore psychological safety, or create the first genuine “aha” moment. Those come from culture.

What Works Instead

The organizations making the fastest progress have figured out three things.

Grant Permission Publicly

Not a policy document. A signal from leadership that experimentation is valued over polish in early-stage work, that trying AI and failing is more useful than not trying at all, that the goal right now is learning and the team’s job is to generate that learning as fast as possible. This sounds simple. It is rarely done. Most design cultures are implicitly oriented toward demonstrating competence, not demonstrating curiosity. Changing that orientation requires explicit intervention from whoever leads the team.

Design Practice as Play

Figma ran something called the Great Figma Bake Off: a company-wide competition where teams built projects using AI tools, with live jam sessions across time zones and public showcasing of what people made. Nobody was evaluated on the quality of the output. The whole point was to get hands on the tools in a context where failure was interesting rather than consequential. Atlassian ran their AI Product Builders Week on a similar premise: a week of building, sharing, and learning together, with the outputs shared publicly inside the company. Over a thousand people participated. The tool was never the point. The shared experience of trying together was.

Cultivate Champions

In every organization that has built genuine AI fluency at scale, there’s a small group of people who went deeper earlier. Not because they were assigned to. Because they were curious, or stumbled into the right project, or had a manager who gave them room to explore. These people become the informal infrastructure for fluency-building: the ones colleagues come to when they’re stuck, the ones who share what they’re learning in Slack channels or design crits, the ones who help others find their first “aha” moment. Organizations that identify these people and support them intentionally, rather than accidentally, accelerate the whole team’s development. Six to ten percent of the team with genuine depth is enough to change the culture.

The Mandate Trap

It’s worth being direct about what doesn’t work, because it’s the most common approach.

Mandating AI tool adoption doesn’t build fluency. It builds compliance. Designers who are required to use AI will find the path of least resistance: they’ll use it in ways that are easy to demonstrate, that check the box, that don’t require them to actually change how they work. The learning that comes from genuine experimentation, from trying something because you’re curious and then following where it leads, doesn’t happen under mandate.

Measuring adoption doesn’t build fluency either. If the metric is “what percentage of the team used an AI tool this week,” you’ll get the metric. You won’t get the change in practice that makes the metric meaningful.

The organizations that measure fluency well are measuring different things: the quality of outputs over time, the rate at which AI-assisted work is replacing lower-value manual work, the diversity of use cases the team is exploring. Those are lagging indicators, but they’re the right ones.

The Deeper Thing

There’s something underneath all of this that’s worth naming.

Design teams that build genuine AI fluency do it because their leaders are curious about AI themselves. Not performing curiosity. Not requiring it of others while remaining personally detached. Actually using the tools, sharing what they find, showing the team that the leader is also in the middle of figuring this out.

The signal that matters most isn’t the training budget or the completion certificate. It’s whether the person at the top of the design org treats AI as a live question they’re figuring out alongside the team, or as a mandate they’re delivering from a distance.

Teams follow what leaders do, not what they ask for, and no certification path substitutes for that. This isn’t specific to AI. It’s just how culture works.

Leslie Sultani is a design leader writing about the intersection of AI, design practice, and organizational change.

Further Reading

State of AI in Design 2025 — Foundation Capital & Designer Fund. The source for the finding that 96% of designers using AI today are self-taught, and the data on where adoption is and isn’t happening.

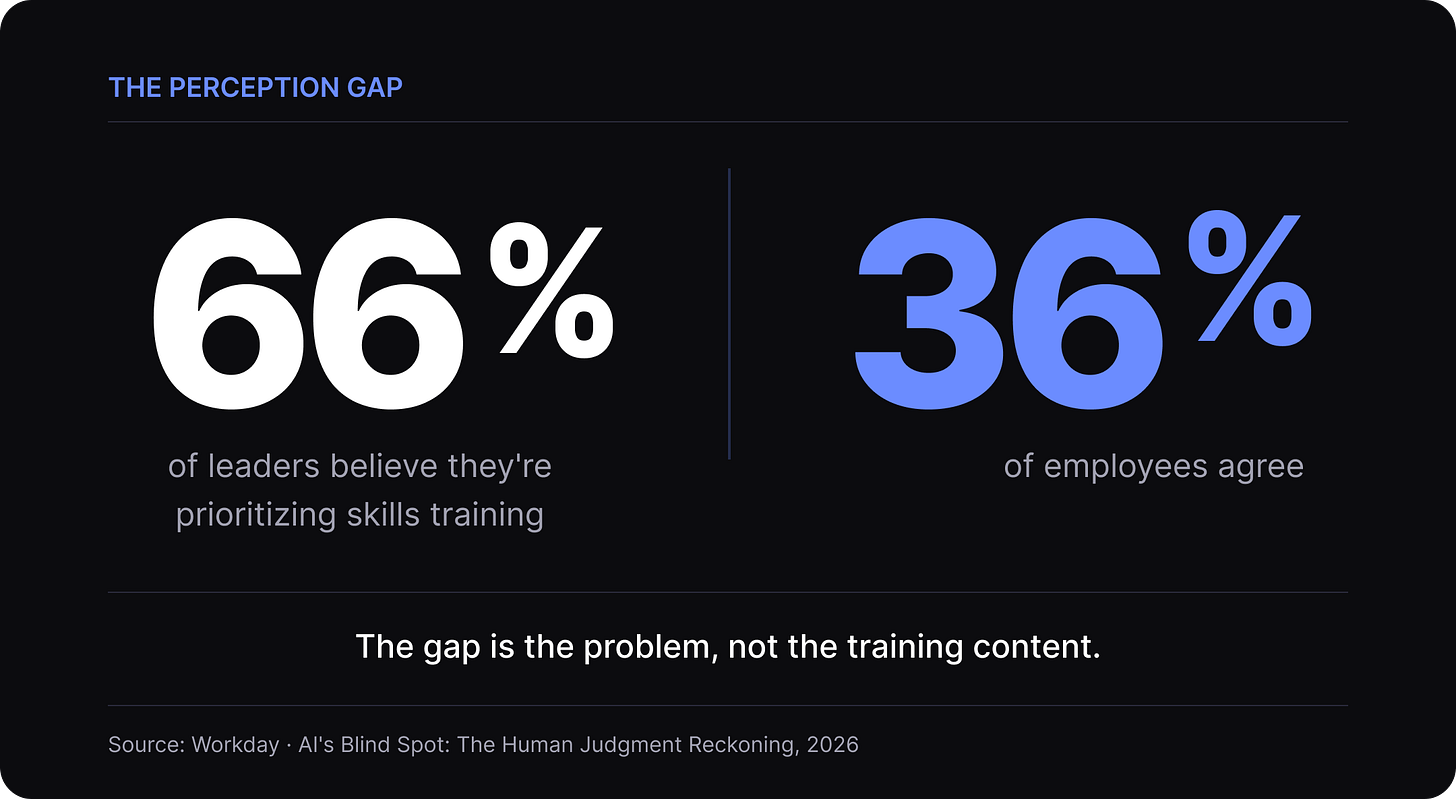

AI’s Blind Spot: The Human Judgment Reckoning — Workday. Survey data that makes the culture-not-curriculum argument concrete: 66% of leaders believe they are prioritizing skills training; only 36% of employees agree. The perception gap is the problem, not the training content.

How Do Workers Develop Good Judgment in the AI Era? — David S. Duncan, Harvard Business Review. The finding that AI helped experienced practitioners more than less-experienced ones because judgment is the bottleneck, not information access. Directly explains why training programs solve the wrong problem and what organizations need to do instead.

AI Product Builders Week: How Hands-On Experimentation Is Shaping Atlassian’s Future — Atlassian. An account of the week-long program where over a thousand employees built with AI tools together. The model behind the structured experimentation approach in this article.

A Design Technologist’s Take on AI Builders Week — Atlassian. A practitioner’s perspective from inside the program, on what actually changes when teams build together with AI rather than train in isolation.

Strategy Summit 2026: Why AI Means Radical Change — Tsedal Neeley, HBR IdeaCast. Introduces the “30% rule”: every worker needs baseline AI fluency, not just technical teams. Treats fluency as a culture and organizational change problem, not a skills gap.

Strategy Summit 2026: Inventive Strategy and the ‘Unbossed’ Organization — Rita McGrath, HBR IdeaCast. The electricity analogy: you wouldn’t create an “electricity strategy,” you’d give people tools to experiment. Directly parallels the permission argument in this article.

5 Design Skills to Sharpen in the AI Era — Figma. What skills teams should be building fluency in, based on research across the design industry.