03. The Framework for Knowing Where Human Judgment Still Lives

One of the hardest things about working with AI in a design practice isn’t the tools. It’s the decisions that don’t come with instructions.

Should a designer review every screen an AI generates, or just the ones that feel risky? Who signs off when AI suggests a pattern the design system doesn’t cover yet? When a product team wants to move fast and let AI handle a component, how do you know if that’s fine or if it’s the kind of shortcut that surfaces three months later as a trust problem?

Most teams wrestle with these questions ad hoc. Someone pushes back, or nobody does, and over time the team develops an informal sense of where AI is trusted and where it isn’t. The problem with an informal sense is that it’s inconsistent. Different designers make different calls. Different product managers have different risk tolerances. And the accumulated effect of hundreds of small decisions made without a shared framework is a design practice that doesn’t actually know what it believes about AI.

There’s a better starting point. Two questions, asked about any piece of work, sort out most of it.

The Two Variables That Actually Matter

The first question: how high are the stakes if this goes wrong?

Stakes here means the real cost of a bad outcome. Not just a design critique. Not “the PM won’t like it.” Stakes means: could this make users feel like you’re lying to them? Could it expose the company to legal or regulatory risk? Could it put a dent in the brand that takes years to fix? Could it fail someone who’s already frustrated and just needs the “Cancel” button to work?

High-stakes work includes anything involving how users make important decisions, anything that touches personal data or sensitive information, and anything where a failure is costly for the person going through it.

Low-stakes work includes visual exploration, copy variations, early component builds, annotation, and first-draft documentation. The cost of AI getting it wrong is iteration time, not user harm.

The second question: how novel is this problem?

Novelty means how much established pattern or precedent exists for what you’re designing. A standard notification component for a well-understood interaction is low novelty. A new feature that asks users to trust AI with a consequential decision is high novelty. There’s no precedent in your system, no established pattern to reference, no historical data about how your users will respond.

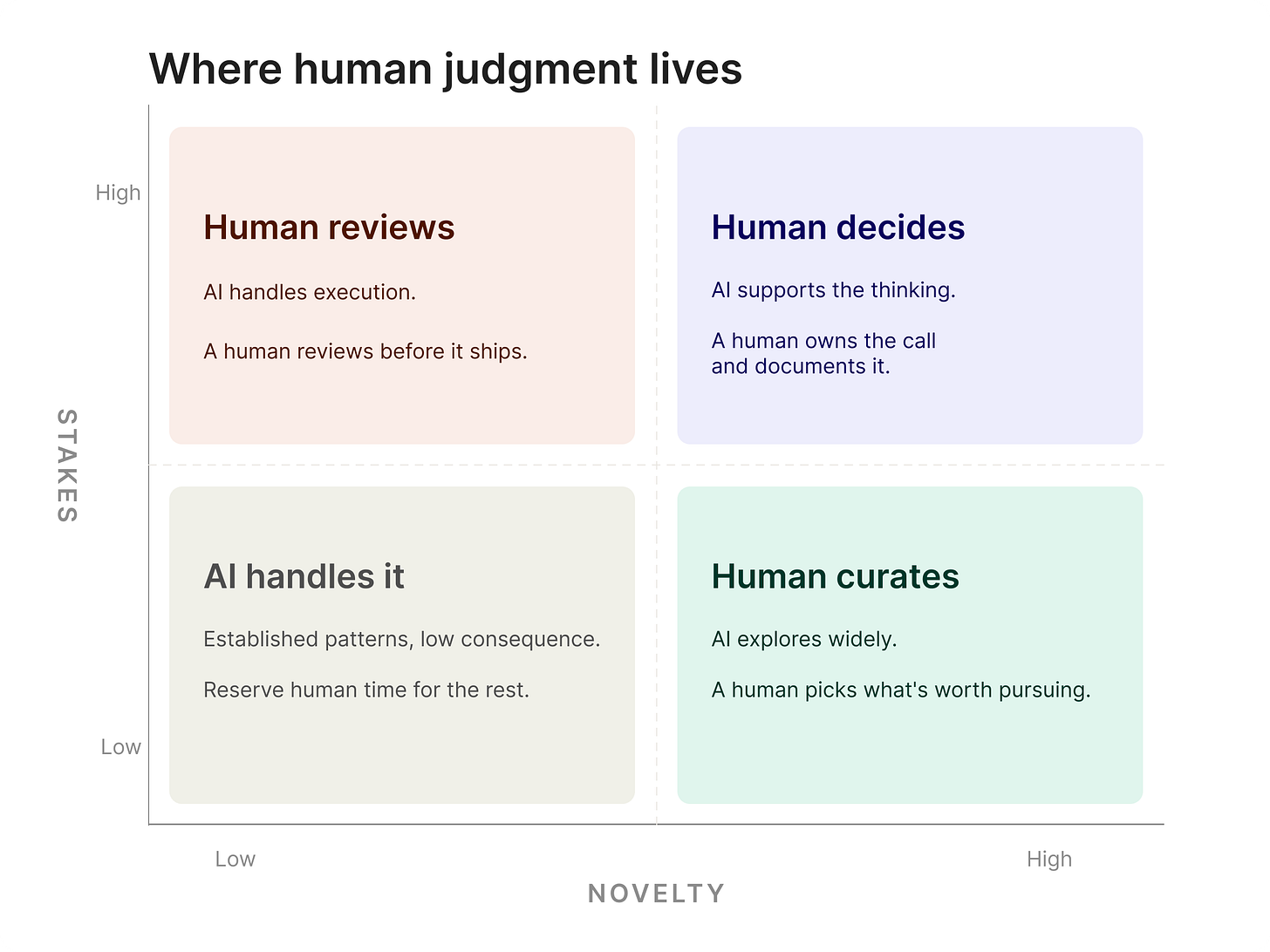

When you map these two variables against each other, four zones emerge, and each one calls for a different relationship between human judgment and AI.

The Four Zones

High stakes, high novelty. This is where human judgment is not optional. New product directions. Features that touch how users understand what AI is doing on their behalf. Experiences that carry legal or ethical exposure. Trust-critical moments where the wrong design decision isn’t just a UX failure but a breach. AI can do useful work here, mostly pulling together research and generating options for the team to react to. But a human decides, and that decision is documented and owned.

High stakes, low novelty. This is the zone that looks clean on paper and gets messy in practice. The pattern exists and the interaction is well understood, but the consequences of getting it wrong are real. In theory, AI handles execution and a human gatekeeps the output before it ships. Not a quick glance. A real review, with the stakes explicitly in mind.

In practice, this is where most teams trip up. I’ve watched it happen. The work feels routine, so the review becomes routine. Someone skims the output, it looks close enough, and it ships. The problem is that “close enough” in a high-stakes context is how you end up with an accessibility failure in a payment flow or a disclosure screen that technically meets the legal requirement but confuses every real person who reads it. The pattern being familiar is exactly what makes the risk easy to underestimate. Teams that get this zone right treat the review as a deliberate act, not a formality.

Low stakes, high novelty. This is where you let AI throw things at the wall. The problem is new enough that exploring widely is valuable, and the consequences of a wrong direction are low enough that you can afford to generate, react, and toss quickly. A designer’s job here is picking what’s actually worth keeping, and being able to say why. That judgment is still human. The generation is not.

Low stakes, low novelty. Let AI do it. Established patterns, understood interactions, low consequence if the first draft isn’t perfect. Offloading this work to AI and protecting human attention for the other three zones is how a design team actually scales without sacrificing quality where quality matters.

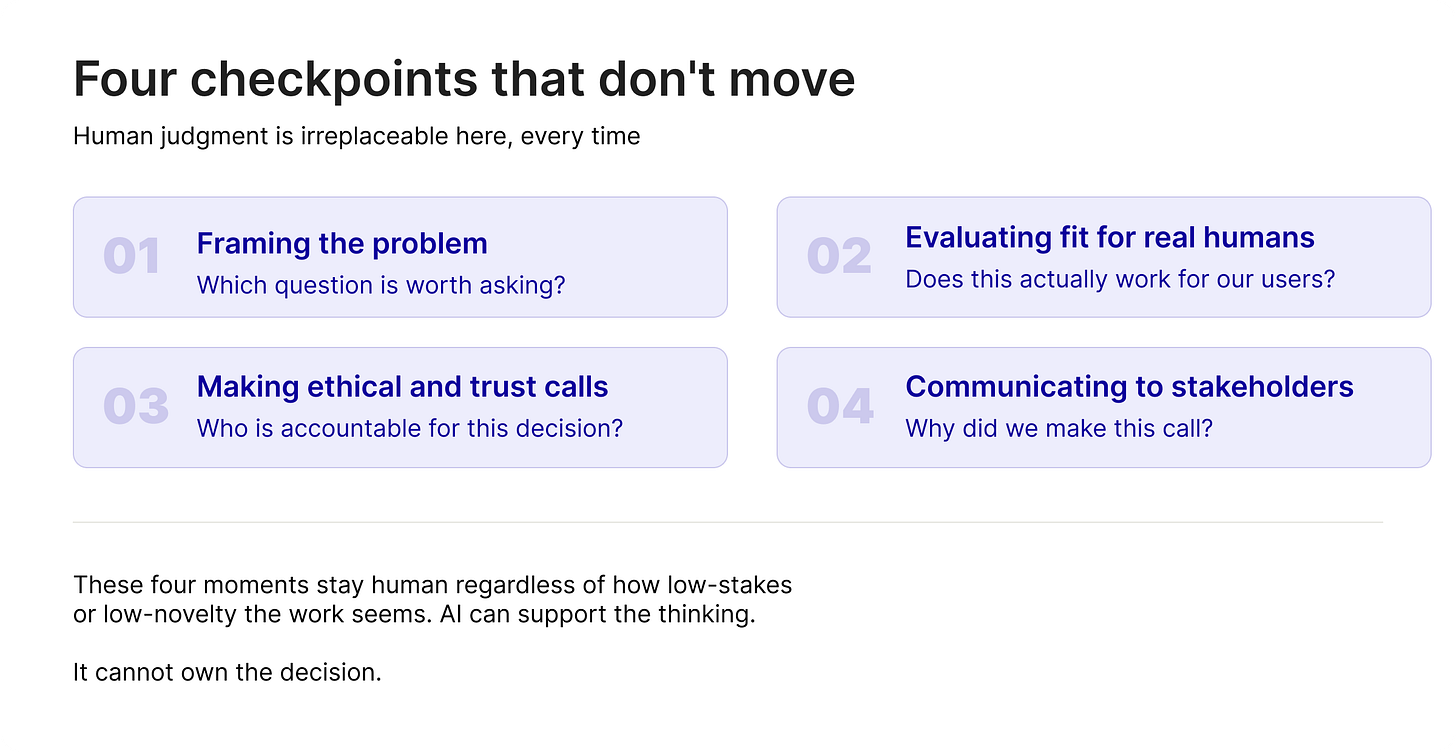
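
For teams that like to write things down, the four zones above can be sketched as a simple lookup keyed on the two questions. This is only an illustration: the names `Level`, `ZONES`, and `zone_for` are made up for this example, and the one-line summaries are paraphrases of the zone descriptions, not a formal policy.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# Keys are (stakes, novelty). The summaries paraphrase the four zones
# described in the text; the wording here is an assumption, not a rule.
ZONES = {
    (Level.HIGH, Level.HIGH): "Human decides and owns it; AI gathers research and generates options.",
    (Level.HIGH, Level.LOW): "AI executes; a human does a real review before it ships.",
    (Level.LOW, Level.HIGH): "AI explores widely; a human picks what to keep and says why.",
    (Level.LOW, Level.LOW): "AI does it; human attention goes to the other zones.",
}


def zone_for(stakes: Level, novelty: Level) -> str:
    """Return the human/AI working relationship for a piece of work."""
    return ZONES[(stakes, novelty)]


# Example: a familiar pattern in a consequential flow.
print(zone_for(Level.HIGH, Level.LOW))
```

A two-level scale is deliberately crude. Real work sits on a spectrum, so treat the lookup as a conversation starter rather than a verdict.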

Four Checkpoints That Don’t Move

The zones sort out most decisions. But underneath them, there are four moments in any design process where you can’t hand the wheel to AI, no matter how low-stakes or routine the work seems.

The first is framing the problem. AI is good at answering questions. It is not good at deciding which question is worth asking. The moment where a team steps back and says “are we solving the right thing” is a human moment. Not because AI can’t generate options, but because the answer depends on things that aren’t in the prompt: what the organization actually needs right now, what users have been struggling with, what the team already tried and abandoned.

The second is evaluating fit for real humans. AI doesn’t know your users. It knows patterns across training data. The designer who has sat in research sessions, who has watched people struggle with a flow, who carries the memory of actual human reactions: that designer notices things in an AI-generated interface that a generalized model cannot. Whether something actually works for the specific humans who will use this specific product, that’s a human judgment call.

The third is making ethical and trust calls. Anything that touches how users feel about the product’s honesty, anything that involves tradeoffs between business goals and user interests, anything where the design encodes a value: those decisions need a person behind them. Not because AI will always get them wrong, but because someone has to be willing to stand behind the call. That’s not a job you can delegate to a model.

The fourth is communicating decisions to stakeholders. Explaining the “why” behind a design choice takes someone who understands what the room actually cares about: not just what was decided, but which tradeoffs were weighed and why the team landed where it did. AI can draft the summary. The judgment call about what matters to the people listening, and how to say it so they can accept it, is human.

What to Do With This

Take your team’s current work and map it against these zones. Not every project. Pick three or four recurring work types and ask: where do we consistently treat this as low-stakes when it’s actually high-stakes? Where are we burning human review on low-novelty execution work when we could be moving faster?

The distribution you find will tell you something about where your practice is over-indexed on human involvement and where it’s under-indexed. Both cost you. The teams that get AI-native right aren’t the ones that use AI for everything. They’re the ones that know where it belongs and keep it out of where it doesn’t.
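
If it helps to make the exercise concrete, here is a minimal sketch of that audit. Everything in it is hypothetical: the `WorkType` fields, the `audit` function, and the sample entries are placeholders, and the yes/no flags stand in for your team’s honest assessment of each work type.

```python
from dataclasses import dataclass


@dataclass
class WorkType:
    name: str
    high_stakes: bool        # the team's honest assessment
    high_novelty: bool
    full_human_review: bool  # how the team treats this work today


def audit(work_types):
    """Return (under_reviewed, over_reviewed) lists of work type names."""
    # High-stakes work that ships without a real review.
    under = [w.name for w in work_types if w.high_stakes and not w.full_human_review]
    # Routine, low-consequence work that still gets full human review.
    over = [
        w.name
        for w in work_types
        if not w.high_stakes and not w.high_novelty and w.full_human_review
    ]
    return under, over


# Illustrative entries only; replace with your own recurring work types.
sample = [
    WorkType("payment flow update", True, False, False),
    WorkType("icon annotation", False, False, True),
    WorkType("early concept exploration", False, True, False),
]

under, over = audit(sample)
print("Under-reviewed:", under)
print("Over-reviewed:", over)
```

The two lists correspond to the two costs described above: places where human judgment is missing and places where it is being spent on work AI could carry.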

I don’t think most teams will get this right on the first pass. The zones will bleed into each other, and someone will argue that everything is high-stakes, or nothing is. But even a rough version of this framework is better than what most teams are running on now, which is gut feel and whoever pushes back the loudest. Most of those decisions still don’t come with instructions. At least now they can come with a starting point.

Leslie Sultani is a design leader writing about the intersection of AI, design practice, and organizational change.
