What AI Did to the Design Process

Every few weeks, someone declares the design process dead.

The most visible recent version came from Jenny Wen, who is head of design for Claude at Anthropic. She wrote that the classic discover, diverge, converge sequence cannot survive a world where engineers spin up multiple AI coding agents and ship working versions before designers finish exploring. A week later, Sarah Gibbons at NN/g published the counterargument: the process isn’t dead, it’s compressed. The same discovery, distillation, and refinement still happen, just in an afternoon instead of a month.

They’re both right. And they’re both missing something.

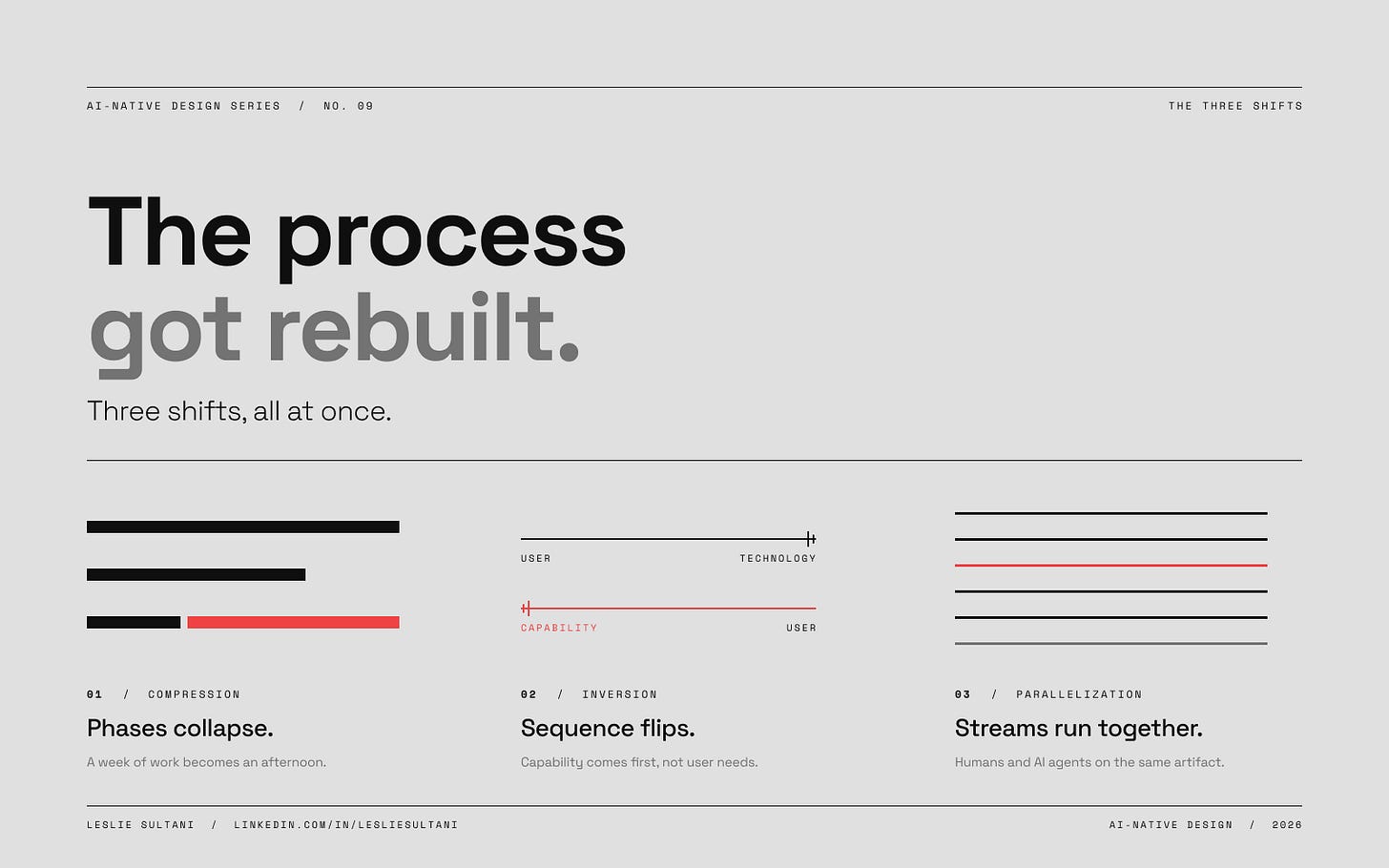

The design process didn’t die, and it didn’t just get faster. It got rebuilt around what AI is actually good at and what it isn’t. Three specific things have changed, and all three are happening at once in the teams that are moving fastest. Most teams aren’t noticing all three. That’s why their AI investment keeps producing output without producing progress.

The First Shift: Compression

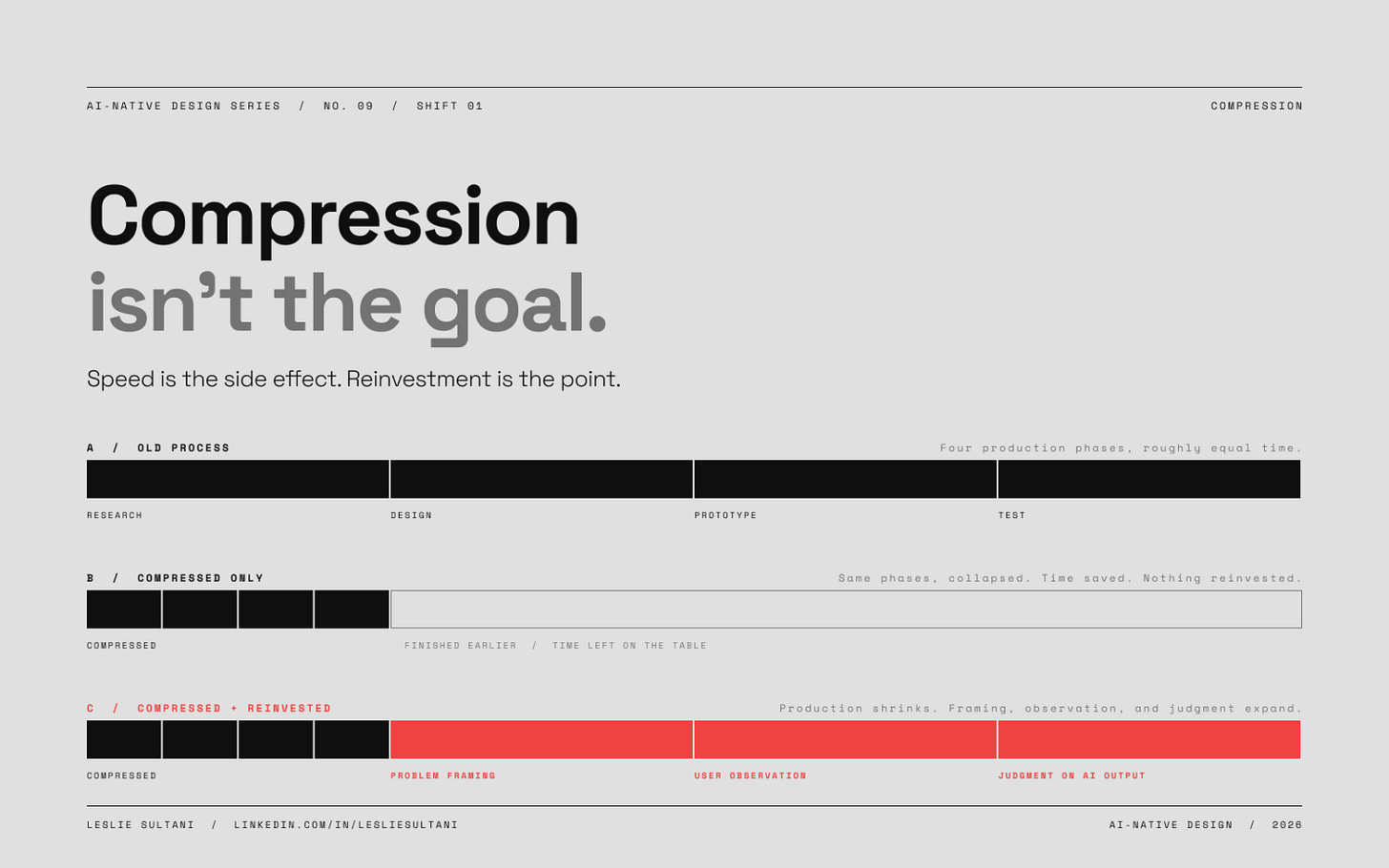

The most obvious change is that the phases of the process have collapsed in time.

Processing a week of research used to take another week. It now takes an afternoon. Competitive analysis used to take two weeks. A well-constructed prompt can produce it in a morning. Wireframe exploration, first-draft copy, visual directions, information architecture: every phase that was primarily about generating or processing information has compressed by roughly an order of magnitude.

The temptation at this point is to run the old process faster. Do what you used to do, just compress the timeline. Most teams do this. It’s the first mistake.

What actually happens in organizations that adopt AI well is that the time saved gets reinvested. The hours you used to spend making sense of research become hours spent on problem framing. The days you used to spend on prototyping become days spent watching users interact with the prototype. The teams getting the most out of compression aren’t the ones running a two-week sprint in three days. They’re the ones running a two-week sprint in three days and spending the days they freed up on the parts that were always rushed.

That’s the thing most playbooks miss. Compression without reinvestment is just a speed-up. Teams that treat AI as a way to finish earlier end up producing the same quality of work in less time. Teams that treat AI as a way to spend more time on the parts that matter produce work of a different quality entirely.

The Second Shift: Inversion

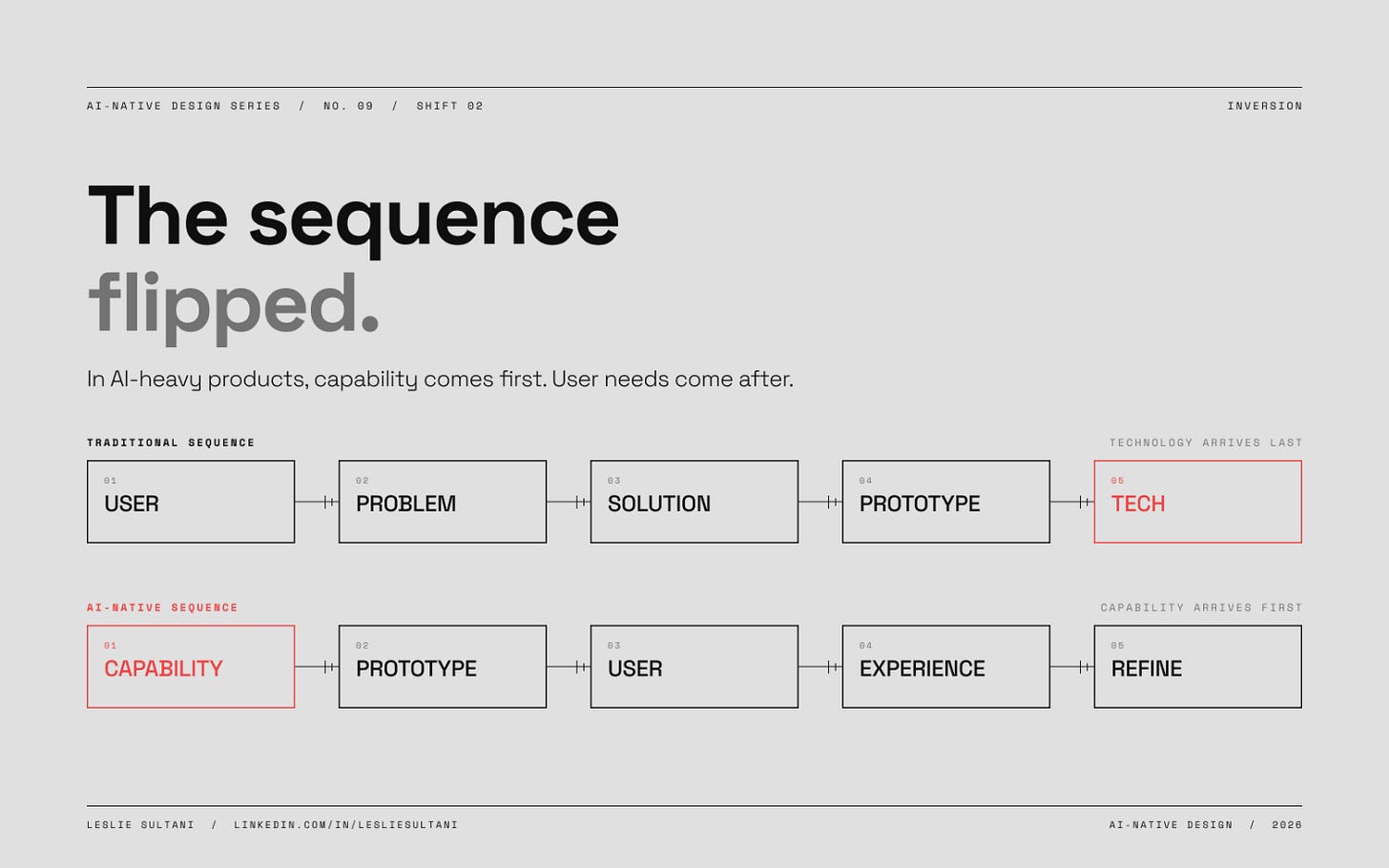

In certain kinds of products, especially AI-heavy ones, the sequence itself has flipped.

Henry Modisett, VP of Design at Perplexity, has described this directly. His team starts with capability exploration, not with user research. They build rough prototypes first, sometimes just command-line implementations, to see what the underlying model can actually do. Only then do they start thinking about the experience layer.

This reverses something that’s been structural to product design for decades. The sequence used to be: understand the user, define the problem, generate solutions, prototype, test. Technology came last, as a constraint or an enabler for the solution the team had designed. In AI-heavy products, this order doesn’t work, because the capability space itself is changing faster than any user research can track. You can’t design an experience around what an AI can do without first knowing what an AI can do, and that knowledge is only available through prototyping.

Karri Saarinen at Linear has made the same argument from a different angle. In “Design for the AI Age,” he writes that designers have to work forward from capability rather than backward from user needs. He frames it as “design is search.” The process is exploratory, not a waterfall: you’re searching through capability space and user-need space at the same time, looking for the overlap. The prototype isn’t the end of the process. It’s the instrument you use to conduct the search.

The inversion doesn’t apply to every product. A team designing a checkout flow still starts with user needs. But any team building with AI at the core of the experience has to know what the AI can do before they can design how users will relate to it. Teams that don’t invert this sequence end up designing experiences for capabilities that don’t exist yet, or missing capabilities that would have changed the whole frame.

The Third Shift: Parallelization

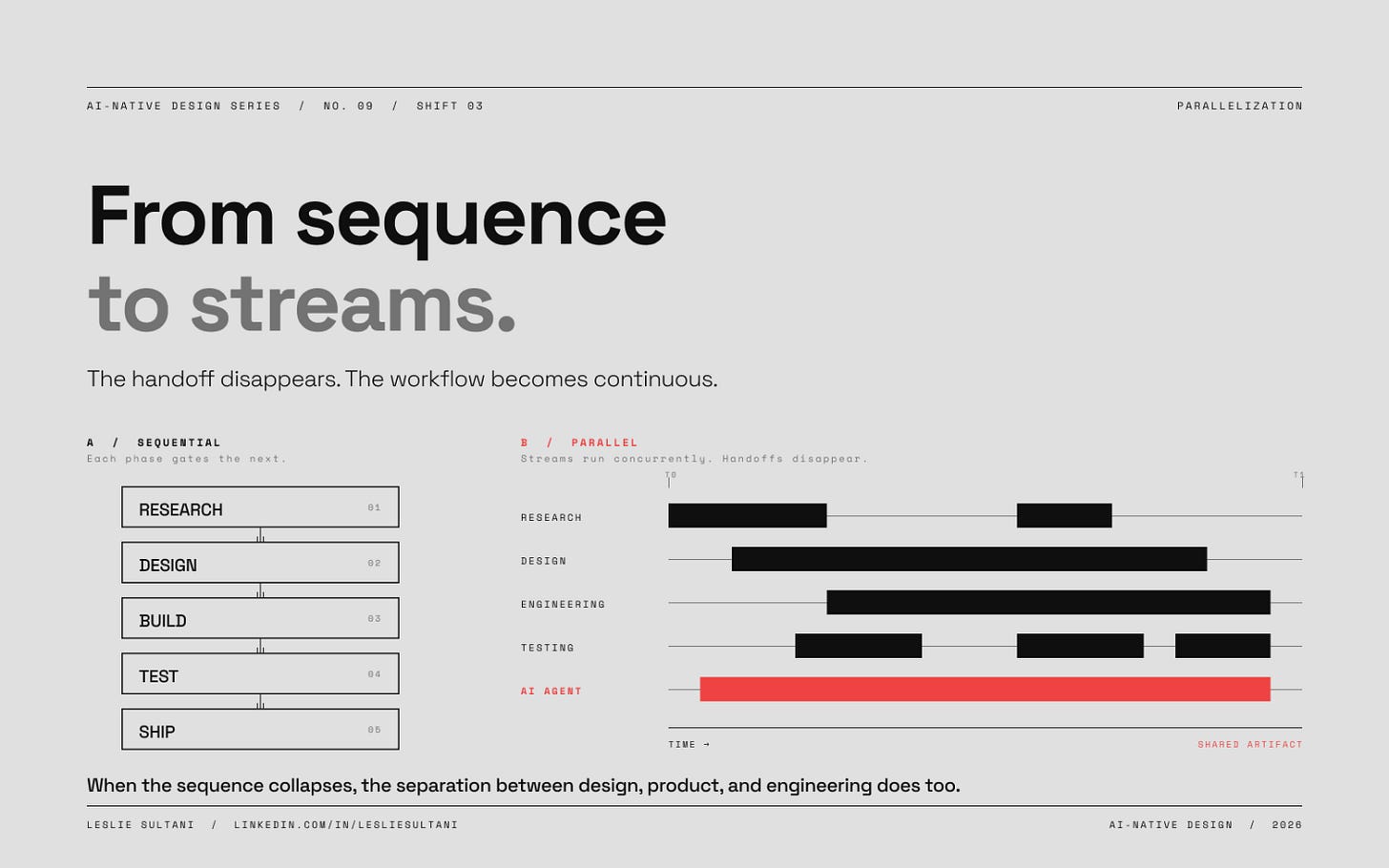

The old process was a sequence. Research, then design, then build, then test, then ship. Each phase gated the next.

What’s happening now in the most advanced teams is that these phases are running concurrently, across different people and different AI agents. A designer is prototyping while research is still coming in. An engineer is building a working version while the designer is still iterating on direction. Testing is happening continuously, not at the end. The handoff between phases, which used to be the critical moment of coordination, is disappearing in teams that have gone furthest with AI adoption.

Intercom is the clearest public example of what this looks like at scale. Their senior design leader Thom Rimmer has said that every designer at Intercom now has a development environment and ships to production. There’s no designer-to-engineer handoff in the old sense, because the designer is doing the work that used to be handed off. Tom Scott’s reporting on AI-native product designers in 2026 describes the full stack: an AI-first editor like Cursor, an AI coding agent, a coded design system, Figma Code Connect mapping components to code, and a GitHub review workflow shared with engineering. The process isn’t sequential anymore. It’s one continuous workflow with AI agents and humans operating on the same artifact at the same time.

The organizational implication of parallelization is bigger than people realize. If the sequential process is gone, then the traditional team structure built around it is also gone. The separate design, product, and engineering functions, each with their own artifacts and handoffs, were a coordination mechanism for a sequential process. When the sequence collapses, the separation does too. This is the part of the conversation that gets the most emotional and politically charged inside organizations, because it touches how people think about their roles. But the operational evidence is clear in the teams that are furthest along.

What Hasn’t Changed

Three shifts get most of the attention. The things that haven’t changed deserve more than they usually get.

Problem framing hasn’t changed. The moment where a team looks at everything they know and says “this is the right question to solve” is still a human moment. AI can produce research, generate options, surface patterns, and compare hypotheses. It cannot tell a team which problem is worth their organizational attention. The framing question requires strategic judgment that isn’t in any prompt, and it’s the part of the process that compresses least of all. If anything, good framing has become more important, because the downstream process can now execute faster on the wrong problem.

Real user empathy hasn’t changed either. Watching a person struggle with something you thought was obvious, hearing the words they use to describe what they’re seeing, noticing the moment they hesitate: these are things AI cannot do for the team. It can distill what users said in a research session. It cannot replace the embodied experience of having been in the room. The teams that still put their designers in front of real users, not in front of AI summaries of real users, notice things the others miss.

Judgment about AI output is new enough that teams are still discovering how important it is. An AI-generated prototype can look polished and test poorly, because something about the interaction model is subtly wrong in a way that only a designer with deep product knowledge can catch. The skill of recognizing what looks right but isn’t is a human skill, and it gets harder as AI output gets more polished. Teams that over-trust AI output at this step are the ones producing what critics have started calling “AI slop”: work that looks fine at a glance and fails in use.

Responsibility for shipping hasn’t changed. Someone has to decide that the work is ready and own the consequences if it isn’t. No AI agent owns the consequences of a decision. The person shipping is still answerable for the quality of what ships, and in teams where the designer now ships directly to production, that responsibility has expanded rather than contracted.

The Pattern Underneath

The design process didn’t die. It didn’t just get compressed either. What happened is more interesting than either framing.

The work got redistributed. Phases that used to be sequential now run concurrently. Activities that were once rare have become routine. The judgment calls designers made quietly in the past are now the central, visible work of the practice. The teams that have internalized this are structuring their practice around it. The teams that haven’t are running an old process faster and wondering why the speed isn’t turning into better outcomes.

Here’s the diagnostic question for any team that wants to know where it actually stands. Look at what your team spent time on last quarter. If the distribution looks like it would have three years ago, just with fewer hours in each bucket, you’re running the old process compressed. If it looks different, with more time shifted toward problem framing, user observation, and judgment on AI output, and less toward the production work that AI now does well, you’ve started the rebuild.

Most teams are still running the old process compressed. A smaller number have started rebuilding. An even smaller number have rebuilt enough that the shape of their work would be unrecognizable to someone from the pre-AI era.

The design process didn’t die. Jenny Wen was right about that. It didn’t just get compressed, either. Sarah Gibbons was right about that. What happened is that the process stopped being a sequence of steps and became something closer to a set of simultaneous practices, distributed across humans and AI agents, with the weight of the work concentrated in the moments that actually require human judgment.

That’s the rebuild. Most organizations haven’t finished it yet. The ones that do will have a different kind of design practice than the one the industry inherited.

Leslie Sultani is a design leader and player-coach writing about the intersection of AI, design practice, and organizational change. Former CPO, UX engineer, and founder of a FinTech AI platform. Read the full AI-Native Design Series on LinkedIn, Substack, or Medium.

Further Reading

The Design Process Is Dead. Here’s What’s Replacing It. — Lenny Rachitsky interviewing Jenny Wen, head of design for Claude at Anthropic. The most widely shared argument for why the traditional discover-diverge-converge process can’t survive in AI-native product teams. The starting point for this article.

Design Process Isn’t Dead, It’s Compressed — Sarah Gibbons and Huei-Hsin Wang, NN/g. The definitive response to the “throw out the design process” crowd: what looks like skipping steps is experienced designers running compressed versions. Source for the compression argument in this article.

Design for the AI Age — Karri Saarinen, Linear. Saarinen’s case for why designers need to reinvent their processes by working forward from capability rather than backward from user needs. Source for the “design is search” framing.

Henry Modisett on Quality — Linear’s Conversations on Quality series featuring Henry Modisett, VP of Design at Perplexity, on fast iteration and his team’s capability-first approach.

Good from Afar, But Far from Good: AI Prototyping in Real Design Contexts — Huei-Hsin Wang and Megan Brown, NN/g. Rigorous evaluation of what AI prototyping tools actually produce when they meet real design scenarios.

Operating as an AI-Native Product Designer in 2026 — Tom Scott, Verified Insider. The practitioner account behind the parallelization section, with specific details on the AI-native product designer’s toolkit and workflow.

Design at Intercom — Tom Scott interviewing Thom Rimmer, Verified Insider. Intercom’s senior design leader on why every designer at the company now ships to production, and how the handoff collapsed.

Generative UI and Outcome-Oriented Design — Kate Moran and Sarah Gibbons, NN/g. The bigger shift behind this article: from designing interfaces to designing adaptive systems that respond to user goals.